With the Hotspot JDK 7u40 there is a nifty new tool called Java Mission Control. Users of the nifty old tool JRockit Mission Control will recognize a lot of the features. This blog focuses on the Flight Recorder tool, and the method profiling information you can get from the flight recorder.

So you want to know why all your CPUs are saturated. Perhaps you even want to get some hints as to what changes can be done to your application to make it less CPU-hungry. Don’t despair – Java Mission Control to the rescue!

Built into the HotSpot JVM is something called the Java Flight Recorder. It records a lot of information about/from the JVM runtime, and can be thought of as similar to the Data Flight Recorders you find in modern airplanes. You normally use the Flight Recorder to find out what was happening in your JVM when something went wrong, but it is also a pretty awesome tool for production time profiling. Since Mission Control (using the default templates) normally don’t cause more than a per cent overhead, you can use it on your production server.

Getting the Data

So, to do method profiling with JMC, simply go about producing a recording like you normally would. Here is an example:

- Start the application you want to profile with the following arguments to enable the flight recorder:

-XX:+UnlockCommercialFeatures -XX:+FlightRecorder - Next start Mission Control. You can just double click on jmc in the bin folder of your 7u40 JDK.

- (Close the Welcome screen if this is your first time starting JMC.)

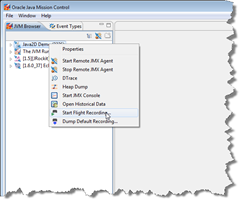

- Right click on the JVM you wish to start a flight recording on in the JVM browser and select Start Flight Recording.

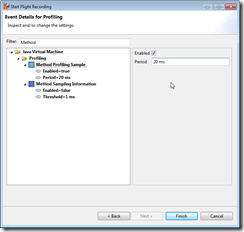

- Leave all the default settings and select the ‘Profiling – on server’ template for your Event Settings. You can usually just hit finish at this point, but I’d like to talk a bit on how you can control the method sampler.

- Click Next to go to the higher level event settings. These are groupings of named settings in the template. Here you can select how often you want JFR to sample methods by changing the Method Sampling setting.

- If this level of granularity is not enough, you can click next and modify the event settings on a per event type basis. Type Method in the filter text box.

Note that you can go back and forth between the previous wizard page to find out what the high level settings really represent. - When satisfied, click finished. The recording will be downloaded automatically and displayed in Mission Control. Click the tab group for Code to start visualizing your Method Profiling Sample events.

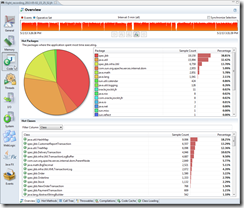

(Since Java 2D hardly produces any load on my machine, I actually switched to a SpecJBB recording here. )

)

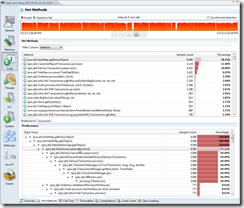

On this tab you get a overview breakdown of where the JVM is spending the most time executing your application. - Switch to the method profiling tab to find a top list of the hottest methods in your application.

The top ones are usually a good place to start optimizing.

Optimization

Once you’ve found your top methods, you either want to make sure that these methods are faster to execute, or that you call the method less. To find out how to call the method less, you normally look for ways to call along the predecessor stack traces less, and you look for the closest frame that you can change. It is quite common that the case is that some JDK core class and method is among the top methods.

Since you cannot rewrite, say, HashMap.getEntry(), you need to search along the path for something that you can change/control. In my recording, the next one is HashMap.get(), which does not help much. The next one after that might be a good candidate for optimization. An alternative would be to find somewhere along the entire path where we can reduce the number of times we need to call down to get whatever we need to get from the HashMap.

After you’ve done your optimizations you do a new recording to see if something else has popped up to the top. Notice that it really doesn’t matter exactly how much faster the method itself became. The only interesting characteristic is the relative time you spend executing that part of the Java code. It gives you a rough estimate of the maximum performance gain you can get from optimizing that method.

Command Line Flags

Aside from the normal command line flags to control the FlightRecorder (see this blog), there are some flags that are especially useful in the context of the method sampling events.

There is one flag you can use to control whether the method profiler should be available at all:

-XX:FlightRecorderOptions=samplethreads=[true|false]

There is also a flag to limit the stack depth for the stack traces. This is a performance optimization and a safety, so that the performance hit doesn’t run away, say if you have an insane stack depth and a lot of deep recursive calls. I believe it is set to 64 by default:

-XX:FlightRecorderOptions=stackdepth=<the wanted stack depth>

Limitations

The Flight Recorder method profiler is quite good at describing where the JVM is spending the the most time executing Java code at a very low overhead. There are, however, some limitations/caveats that can be useful to know about:

- If you have no CPU load, do not care too much about what the method profiling information tells you. You will get way fewer sample points, not to mention that the application may behave quite differently under load. Now, should your application be under heavy load and you still aren’t saturating the CPU, you should probably go check your latency events.

- The method profiler will not show you, or care about, time spent in native. If you happen to have a very low JVM generated CPU load and a high machine CPU load in your recording, you may be spending quite a lot of time in some native library.

Further reading and useful links

Related Blogs:

Java Mission Control Finally Released

My JavaOne 2013 Sessions

The Mission Control home page:

http://oracle.com/missioncontrol

Mission Control Base update site for Eclipse:

http://download.oracle.com/technology/products/missioncontrol/updatesites/base/5.2.0/eclipse/

Mission Control Experimental update site (Mission Control plug-ins):

http://download.oracle.com/technology/products/missioncontrol/updatesites/experimental/5.2.0/eclipse/

The Mission Control Facebook Community Page (not kidding):

http://www.facebook.com/pages/Java-Mission-Control/275169442493206

Mission Control on Twitter:

@javamissionctrl

Me on Twitter:

@hirt

[…] the last blog entry about the method profiler I showed that Mission Control can be quite useful for profiling production systems. However, I only […]

There is already sample base profiling from VisualVM, what is the advantage of Java Mission Control over the sample base profiling from VisualVM? Sound like that one is already low overhead also?

Hi Carfield,

I am not 100% sure what Visual VM uses. I do know that Google uses the unsupported AsyncGetCallTrace for their profiler. The method profiling in Java Flight Recorder is based upon AsyncGetCallTrace, but improved to meet the needs for low overhead profiling in production. This work has been of benefit for the old unsupported AsyncGetCallTrace too, as some of the work to harden the Flight Recorder profiler has gone back into the old AsyncGetCallTrace.

Aside from that, there is an immense benefit in being able to correlate what you get from the method profiler to everything else you get from the Flight Recordings.

Since Oracle is offering you both alternatives, and they are both free in development, you can simply pick the one that suits you the best.

Kind regards,

Marcus

Thanks a lot Marcus and I will try that out

Hi Marcus the Flight Recorder/Mission Control feature for Oracle Java SE looks great.

I am hoping to use the Flight Recorder to capture all method calls, by setting “Method Profiling Sample” to 0ms and “Method Sampling Information” to 0ms. I want to use this information (com.jrockit.mc.flightrecorder API), to construct sequence diagrams, and obtain an understanding of how the software under study operates.

Is this possible, or is bytecode instrumentation the only option I have?

Hi Adrian,

No, it’s not possible to use the Method Profiling events like that. A JPLIS agent (BCI) or a JVMTI agent are your best bets.

/M

Hi Marcus: My window xp 32-bit PC runs JDK1.7_45 , using mission control 5.2’s flight recorder to pinpoint heavy executing code blocks by using Hot Methods and Call Tress features. The result display of either predeceeors in Hot Methods or call tress don’t indicate the my source code line number but showing stack trace , sample count , percentage.

How difficult will that be to add showing users’ application source code line number to be part information of stack trace?

A few java profilers seem to be able to do that. I don’t know how those profilers implement that feature. Interesting enough that, most of them require JDK1.5 above.

Hi Ming,

You can get the source line even in that version of Mission Control, though it is a bit cumbersome. Simply add the samples you are interested in to the operative set, then show the operative set in the log view. Select one of the samples, and the stack trace shown in the event details will show line numbers. That said, line numbers are shown properly in all traces in JMC 5.4.0 (to be available with JDK 8u20).

Kind regards,

Marcus

Hi,

I would like to know if there is something in Java Mission Control to capture a complete call trace of a method and highlight the time taken by each method involved in that call trace.

For example, methodA() -> methodB() -> methodC(), where methodA() took xxxx ms, methodB() took xxxx ms and methodC() took xxxx ms.

Thanks.

Hi Nasir,

Well, the relative time spent in each method is indicated by the number of samples you have at each point in the aggregated stack trace. But no, we do not capture wall clock time spent in any given method. We used to have such a feature in JRockit (exact method timing/invocation counts), but only for a user selected set of methods, as the overhead to do this for all methods would be prohibitive. Since we didn’t find the feature very useful it has not been ported to HotSpot.

Kind regards,

Marcus

[…] more on JMC see – http://hirt.se/blog/?p=343, http://hirt.se/blog/?p=364, and […]

Hi Marcus,

Do you have any links to whitepaper or any other blog posts that describe the impact of running the flight recorder in a production environment. Ideally with the default recording and dump on exit approach to gather data over a sliding window.

Given it’s low overhead I’m trying to convince various architects and management folk that this is a good idea but I need to back up my assertions about overhead.

Thanks,

Rob

[…] Low Overhead Method Profiling with Java Mission Control […]

We are using EBS R12.1.3 and we Installed JDK 1.8.0_92 on Oracle Linux 6U4 (64 bit). We also upgraded JDK v7 in application and database side.

By Opening the JMC we can monitor the JMX console but we can’t able to open JFR. Its showing error like:

Flight recorder feature are not enable. To enable this you need to use a java 7U4 or leter JVM start the JVM with the Flags “-XX:+UnlockCommercialFeatures -XX:+FlightRecorder”

But in EBS R1213 instead of JVM we are using oc4j java container. I want to know where and how i can apply this command to open JFR?

Thanks and regards

Bidyadhar

It is just as it says. Start the JVM with the following flags:

-XX:+UnlockCommercialFeatures -XX:+FlightRecorder

I believe you are licensed to use JFR as part of EBS, but I would check with Oracle to make sure.

Dear Marcus,

As for the above info I have shared we are not using JVM. As we are using Oracle EBS R12.1.3 here OC4J is the java platform instead of JVM.

So please clearify me in which file i have to edit this command or how can i apply this -XX:+UnlockCommercialFeatures -XX:+FlightRecorder java option.

I don’t know much about EBS, but searching the internet, it would seem that EBS 12.1.3 was released around 6 years ago. Are you sure it is certified to run it on JDK 8? Anyways, I am not sure where the scripts are for starting OC4J. You could try searching your files for commonly used flags, such as -Xmx and -Xms to identify where the JVM flags are.

Also, you probably meant that OC4J is the JavaEE platform, instead of WLS. Any and all Java programs will run on a JVM.

Hope this helps!

Is there any way to see ABSOLUTE values (in milliseconds) instead of percentage in the Hot Methods view? I’d like to see how long the CPU spent on a particular method in comparison with other recordings.

Generally asking: What is the “Percentage” of? I guess some “CPU time” and I’d be ok if I can calculate it myself, but I can’t even find a reference value.

See the Facebook page for an ongoing discussion. 🙂